
I hope my simple approach of identification followed by correction will help newcomers in tackling these effectively. These are only three of the most common types of errors faced during data cleaning. replace(column_name, percentage > 100, 100) The following input will replace all values in the column greater than 100 with 100. For example, we can replace an erroneous data value of 104% with 100%. In this case, the range is defined as 0= 0, percentage 100, NA)įinally, the out of range values can be replaced by the range limits. For example, the percentage in a test is limited to values between 0 and 100. In some cases, data is limited to a specific range. library(stringr) str_remove(column_name, ",") 3. It takes the target column/string as the first argument and the character to be removed as the second argument. The str_remove function can be used to correct such a data error. This is possible through stringr which is a powerful string manipulation library. While these are helpful characters and help identify large numbers efficiently, a possible error is that data might be read as a character type.Ī) Correction In this case, the unnecessary characters must first be removed, and then the data type must be changed to the correct data type. In an untidy data set, numerics may be imported along with commas or percentages. For example, if a numeric data type has been incorrectly imported as a character data type, the as.numeric function will convert it to numeric data type. is.numeric(column_name) is.character(column_name)ī) Correction After all the incorrect data type columns have been identified, they can simply be converted to the correct data type by using the as functions. If a numeric column is an argument for the is.numeric function, the output will be true, while if a character column is an argument for the is.numeric function the output will be false. I have only mentioned the common is functions, but it can be used for all data types. 
The is function can be used for each data type and will return with a logical output (true/false). library(dplyr) glimpse(dataset)Īnother form of logical checks includes the is function. Glimpse will return all the columns with their respective data types. The glimpse function is part of the dplyr package which needs to be installed before glimpse can be used. For example, a common error is when numeric data containing numbers are improperly identified and labeled as a character type.Ī) Identification Firstly, to identify incorrect data type errors, the glimpse function is used to check the data types of all columns. When data is imported, a possibility exists that RStudio incorrectly interprets a data column type, or the data column was wrongly labeled during extraction.

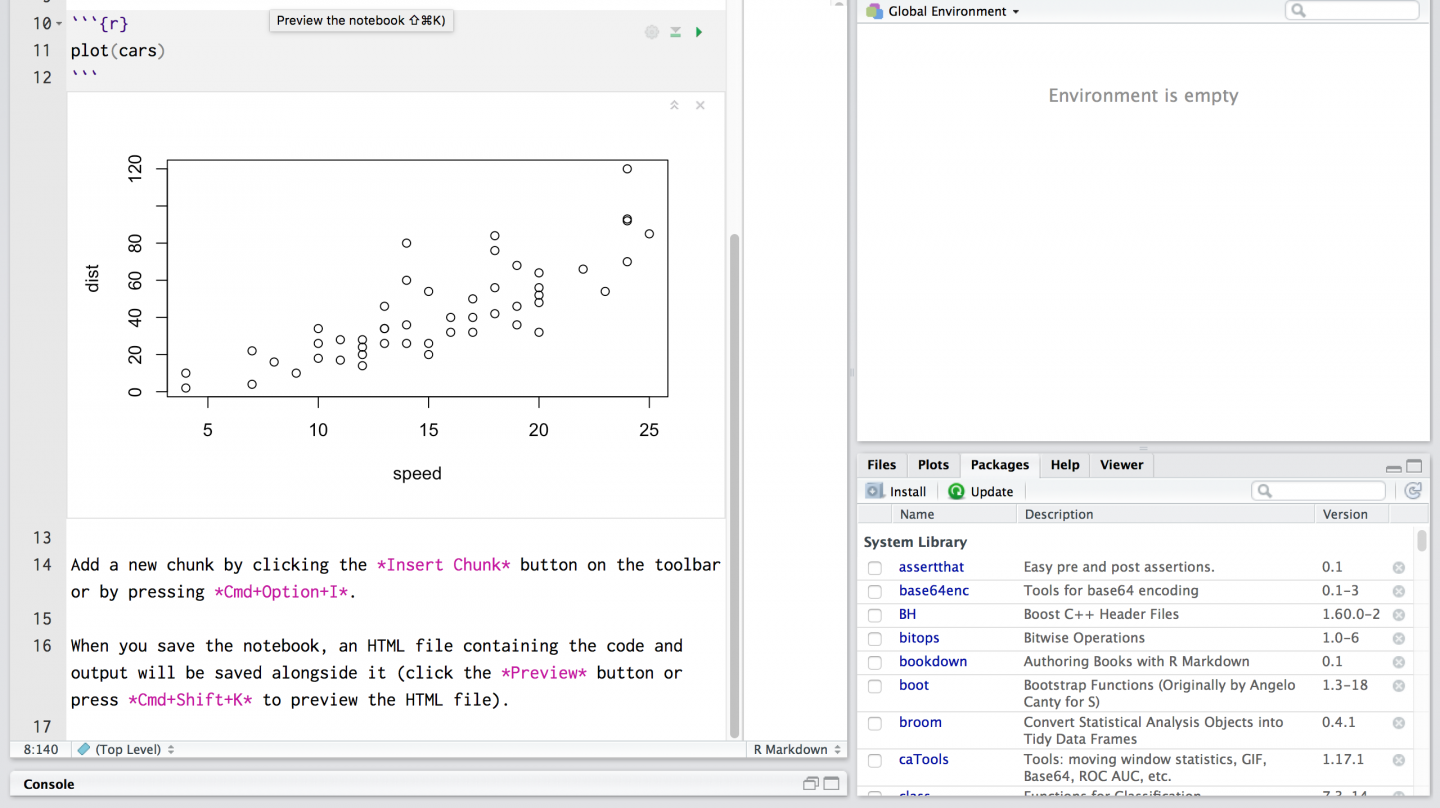

These can be installed by simply writing the following code in RStudio: install.packages("tidyverse") install.packages("assertive") 1. I will also be introducing some powerful cleaning and manipulation libraries including dplyr, stringr, and assertive. I will briefly describe three types of common data errors and then explain how these can be identified in data sets and corrected. This guide will serve as a quick onboarding tool for data cleaning by compiling all the necessary functions and actions that should be taken. Thus, if untidy data is not tackled and corrected in the first step, the compounding effect can be immense. Ultimately, the insights generated that are used to make critical business decisions are incorrect, which may lead to monetary and business losses. The variable text, containing a sentence, is shown in the script.Errors and mistakes in data, if present, could end up generating errors throughout the entire workflow. Every time we introduce a new package, we'll have you load it the first time. Going forward, we'll load tm and qdap for you when they are needed. Tolower() is part of base R, while the other three functions come from the tm package.

stripWhitespace(): Remove excess whitespace.removePunctuation(): Remove all punctuation marks.tolower(): Make all characters lowercase.For example, the words used in tweets are vastly different than those used in legal documents, so the cleaning process can also be quite different. Specific preprocessing steps will vary based on the project. For example, it might make sense for the words "miner", "mining," and "mine" to be considered one term. In bag of words text mining, cleaning helps aggregate terms. First, you'll clean a small piece of text then, you will move on to larger corpora.

Now that you know two ways to make a corpus, you can focus on cleaning, or preprocessing, the text.
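For the incorrect data type case, the identification and correction steps can be sketched with base R alone. The data frame `dataset` and its columns `name` and `score` are made up for illustration, and `sapply(dataset, class)` is used as a base R stand-in for `glimpse()` so that the snippet runs without dplyr.

```r
# Hypothetical data: `score` holds numbers but was read in as character.
dataset <- data.frame(
  name  = c("a", "b", "c"),
  score = c("10", "20.5", "30"),
  stringsAsFactors = FALSE
)

# Identification: list each column's type, then test one column directly.
col_types <- sapply(dataset, class)
print(col_types)
is_num_before <- is.numeric(dataset$score)    # FALSE: stored as character
is_chr_before <- is.character(dataset$score)  # TRUE

# Correction: convert the column with the matching as.* function.
dataset$score <- as.numeric(dataset$score)
is_num_after <- is.numeric(dataset$score)     # TRUE
```

Keep in mind that `as.numeric()` returns NA (with a warning) for any string it cannot parse, which is why stray characters such as commas need to be removed before converting.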
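For numbers imported with commas, the remove-then-convert step might look like the sketch below. It assumes the stringr package is installed; the `sales` vector is invented for illustration. It also shows the difference between `str_remove()` and `str_remove_all()` on a value that contains two commas.

```r
# Assumes stringr is installed. `sales` is a made-up column for illustration.
library(stringr)

sales <- c("1,200", "3,450,000", "87")

# str_remove() drops only the first match in each string;
# str_remove_all() drops every match.
first_only <- str_remove(sales, ",")
all_commas <- str_remove_all(sales, ",")

# Once all commas are gone, the conversion to numeric succeeds.
sales_num <- as.numeric(all_commas)
print(sales_num)
```

With `str_remove()` alone, the second value would still contain a comma and would become NA on conversion, so `str_remove_all()` is the safer choice for large numbers.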
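For range-limited data such as test percentages, a minimal base R sketch of both correction options follows. The `percentage` vector is hypothetical, and the plain logical comparison used for identification is an assumption of this sketch rather than something the text specifies.

```r
# Hypothetical test percentages; valid values lie between 0 and 100.
percentage <- c(55, 104, 98, -3, 100)

# Identification: flag values outside the closed range [0, 100].
out_of_range <- percentage < 0 | percentage > 100

# Correction, option 1: treat out-of-range values as missing.
as_missing <- replace(percentage, out_of_range, NA)

# Correction, option 2: cap values at the range limits.
capped <- replace(percentage, percentage > 100, 100)
capped <- replace(capped, capped < 0, 0)

print(as_missing)
print(capped)
```

Which option fits depends on the data: capping keeps the row but assumes the true value sits at the limit, while NA makes the uncertainty explicit.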
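The text preprocessing functions listed for the tm workflow can be tried on a single string before moving to a corpus. This sketch assumes the tm package is installed; the sentence stored in `text` is made up and is not the one from the original exercise.

```r
# Assumes the tm package is installed. `text` is a made-up sentence.
library(tm)

text <- "Hello,   World!  Text   mining is FUN."

lowered  <- tolower(text)               # base R: make all characters lowercase
no_punct <- removePunctuation(lowered)  # tm: remove punctuation marks
cleaned  <- stripWhitespace(no_punct)   # tm: collapse runs of whitespace

print(cleaned)
```

Applying the steps one at a time and keeping the intermediate results makes it easy to see what each function changes.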
